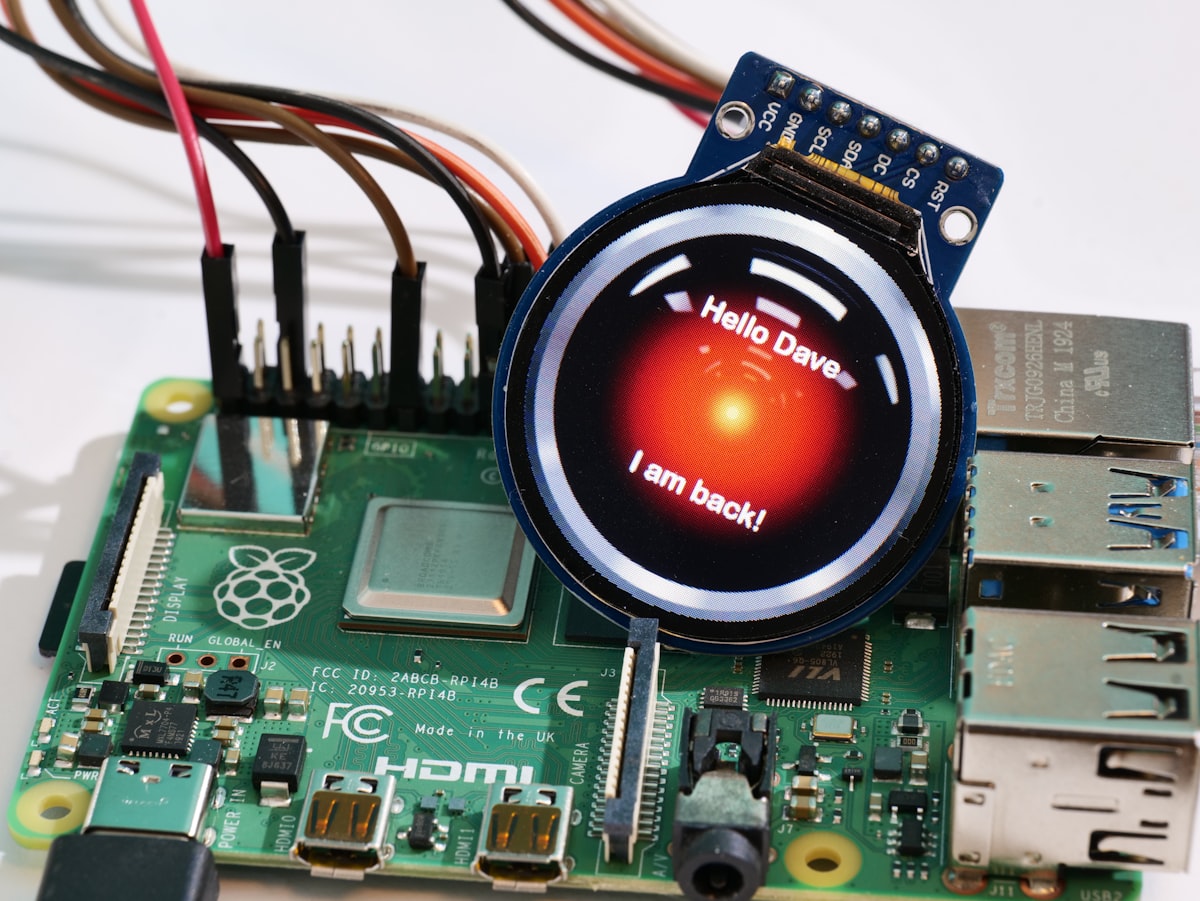

Chatbots as the simplest first step in an AI world? Think again!

Many multinationals are rushing to roll out AI-powered chatbots as the simplest way to show they're doing something about AI. And that's fair: in one way or another, we have all recently discovered the power of a fluid (yet automated) conversation with an AI agent.

What we also rapidly learned is how unreliable they still are. There's even a now-established term for the way a large language model will answer any question quite assertively, whether or not it has a proper answer: 'hallucination.' And lo and behold, Air Canada just got into a tussle with a passenger over a bereavement travel refund.

Here's the drama: the customer consulted Air Canada's chatbot for info on bereavement rates, and the chatbot gave him the wrong guidelines. It told him to book a flight and claim a refund within 90 days. But surprise, surprise, Air Canada's actual policy said no refunds for bereavement travel once booked.

Now, Air Canada, instead of owning up, pulled a wild move. They claimed the chatbot was basically a rogue agent, a separate legal entity responsible for its own actions. Even better, they argued customers shouldn't trust the chatbot... Eventually, the issue was brought to British Columbia's Civil Resolution Tribunal, and as you can expect, the tribunal didn't buy Air Canada's defense. It slammed the airline for not taking "reasonable care" to ensure the chatbot was accurate and ordered it to compensate the passenger.

The airline didn't lose millions over this; it mostly looks silly and somewhat incompetent. It's still a cautionary tale: what seems like an easy AI/innovation checkbox to tick (implementing a better chatbot for basic customer service) is far from a trivial task.

You could probably compare this kind of misadventure to how, back in 2018, the industry felt self-driving cars would rapidly take over, since the technology was already mostly there.

Mostly.

We should remember that the last few percent of improvement needed to make any technology robust enough for wide public use do not come linearly. Getting from 98% to 99.8% safety and reliability often takes as much time as getting from 20% to 80%.
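One back-of-the-envelope way to see why is to look at failures instead of successes. The sketch below is only an illustration, not a measurement: it assumes "reliability" can be boiled down to a single percentage, and `failure_reduction` is a made-up helper name.

```python
def failure_reduction(start_pct: float, end_pct: float) -> float:
    """Return how many times smaller the failure rate becomes
    when reliability moves from start_pct to end_pct (both in percent)."""
    return (100 - start_pct) / (100 - end_pct)


# Early progress: failures drop from 80% of cases to 20% of cases.
early = failure_reduction(20, 80)

# Late progress: failures drop from 2% of cases to 0.2% of cases.
late = failure_reduction(98, 99.8)

print(f"20% -> 80%:   failures cut by about {early:.0f}x")
print(f"98% -> 99.8%: failures cut by about {late:.0f}x")
```

Under that simplification, the "small" step from 98% to 99.8% means eliminating nine out of every ten remaining failures, a bigger relative cut than the whole climb from 20% to 80%. And the failures that remain at that stage tend to be the rare, odd cases.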

Lesson learned? Probably not.