Substack, just another Ponzi scheme on society

It's 2023, and we still haven't learned much about social media, or about what Silicon Valley lingo calls "tech" platforms.

We got used to personal data leaks, bots posing as your friends, election meddling, hate speech, and far more. From Facebook to Twitter to TikTok, the assumption is that, when it comes to tech entrepreneurs, boys will be boys. Going through an adolescent phase, where the business needs to be scrappy, move fast, and break a few rules along the way before reaching a stable state, is considered normal. Not normal as in conforming to acceptable societal behavior, but normal as in a statistical average.

And there are reasons for that.

These reasons are financial.

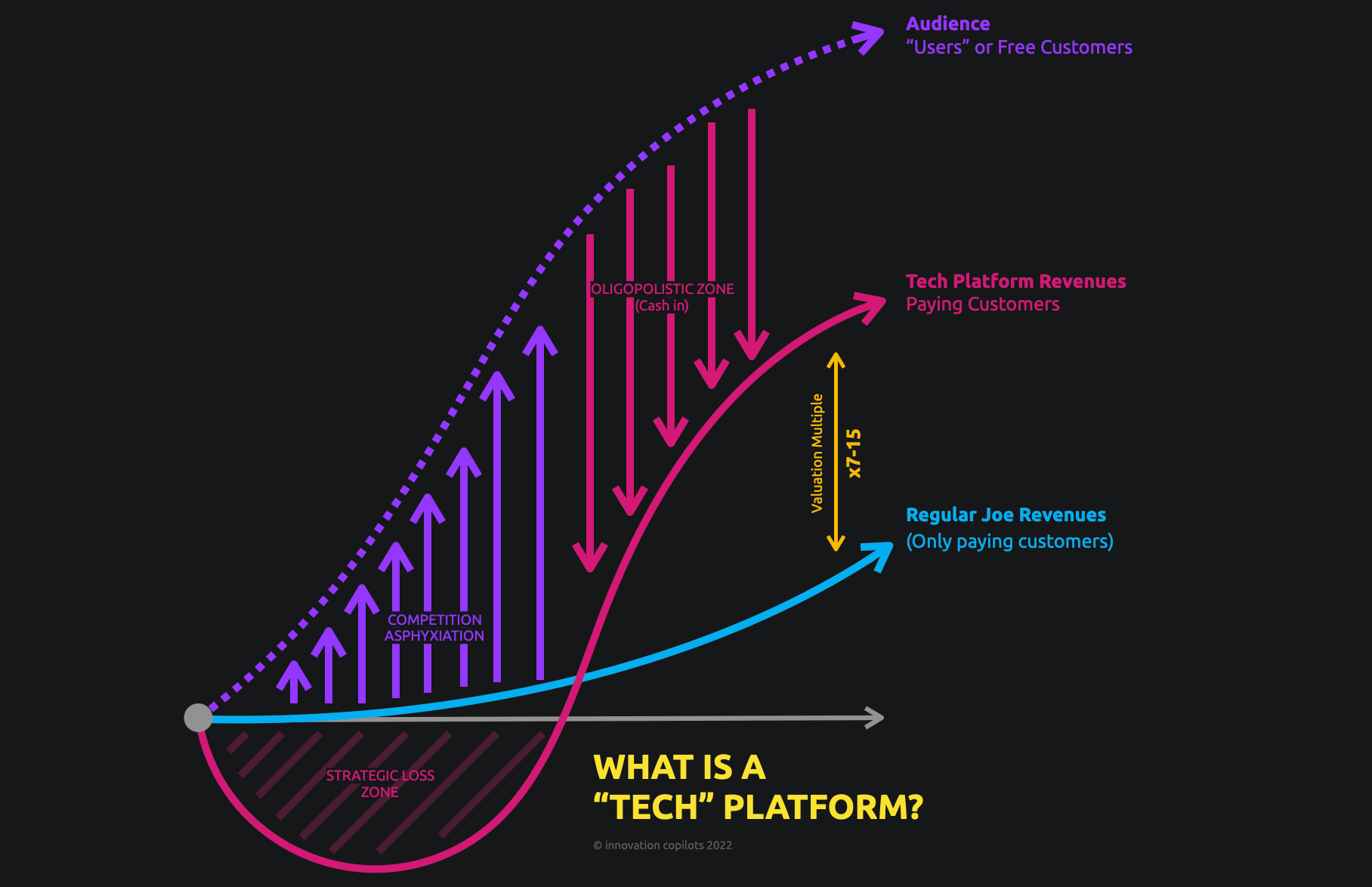

The game for these companies is simply to run any possible competition into the ground. How so? By getting mill... billions to operate at heavy losses for years, sucking the oxygen out of the room for "regular Joe" companies that need to turn a profit, and then being the last one standing. But to do that, you can be Amazon and deal with actual warehousing and the shipping of physical goods, or, far better, you can deal only in the fastest and most volatile of all goods: social attention.

The reason U.S. "tech" companies are mostly social platforms? It's what can potentially scale the fastest, with powerful network effects and next to zero cost of goods sold beyond some IT and (yes) tons of marketing. In this game, there is no room for clinical trials or deeptech. Cancer and climate change don't come with sexy valuation multiples.

And even I can still be caught off guard by the sheer cynicism of Silicon Valley "tech" entrepreneurs. Yesterday's interview of Chris Best (Substack) by Nilay Patel (The Verge), for instance, left me rather... speechless.


For context:

Substack, founded in 2017, has about 80 employees and has raised $82.4M from VCs such as Andreessen Horowitz, Y Combinator, and Fifty Years. They never reported their financials until very recently, when they tried an IPO before canceling it, admitting along the way that they were operating at a loss. Which, in their business, is standard practice: if you have enough VCs who trust that you can keep losing money until you're the last one standing, this means big money.

Back in 2021, they also reported about 1,000,000 paid subscriptions and said their top ten writers made a combined $20M. Don't get too hung up on these numbers; they're unverifiable.

On paper, though, Substack is one of the "good guys": they don't monetize through ad revenue, which would push them to incite and fuel both extreme content and aggressive online behavior (see above: Facebook or Twitter). Their business model is straightforward: they let content producers publish paid newsletters and take a cut of the transactions. Neat. I love it. Still, over the years, they have run into numerous controversies over the way they pay their writers, the anti-vax and hateful newsletters they let operate on the platform, and so on.

The thing you have to understand is that even with a business model that doesn't rely on ads, the priority is still to reach critical mass as soon as possible. Which means that any extra cost, such as... I don't know... content moderation? Is a cost you want to avoid. Which, in turn, translates into a cynical embrace of fuzzy libertarian free-speech advocacy.

Case in point, from the aforementioned interview:

Nilay Patel: When you run a social network that inherits all the expectations of a social network and people start posting that stuff and the feed is algorithmic and that’s what gets engagement, that’s a real problem for you. Have you thought about how you’re going to moderate Notes?

Chris Best: (...) The way that I think about this is, if we draw a distinction between moderation and censorship, where moderation is, “Hey, I want to be a part of a community, of a place where there’s a vibe or there’s a set of rules or there’s a set of norms or there’s an expectation of what I’m going to see or not see that is good for me, and the thing that I’m coming to is going to try to enforce that set of rules,” versus censorship, where you come and say, “Although you may want to be a part of this thing and this other person may want to be a part of it, too, and you may want to talk to each other and send emails, a third party’s going to step in and say, ‘You shall not do that. We shall prevent that.’”

CB: And I think, with the legacy social networks, the business model has pulled those feeds ever closer. There hasn’t been a great idea for how we do moderation without censorship, and I think, in a subscription network, that becomes possible.

NP: (...) That one I’m pretty sure is just flatly against your terms of service. You would not allow that one. That’s why I picked it.

CB: So there are extreme cases, and I’m not going to get into the–

NP: Wait. Hold on. In America in 2023, that is not so extreme, right? “We should not allow as many brown people in the country.” Not so extreme. Do you allow that on Substack? Would you allow that on Substack Notes?

CB: I think the way that we think about this is we want to put the writers and the readers in charge–

NP: No, I really want you to answer that question. Is that allowed on Substack Notes? “We should not allow brown people in the country.”

CB: I’m not going to get into gotcha content moderation.

NP: This is not a gotcha... I’m a brown person. Do you think people on Substack should say I should get kicked out of the country?

CB: I’m not going to engage in content moderation, “Would you or won’t you this or that?”

NP: That one is black and white, and I just want to be clear: I’ve talked to a lot of social network CEOs, and they would have no hesitation telling me that that was against their moderation rules.

CB: Yeah. We’re not going to get into specific “would you or won’t you” content moderation questions.

NP: Why?

CB: I don’t think it’s a useful way to talk about this stuff.

What is at play here is not a childish (or American, which, quite honestly, is hard to tell apart from a European perspective) view of freedom of speech. Nor is it how hard it is to draw the line between censorship and moderation.

What is at play is only this (from the same interview):

NP: I mean this runs into the, I would say, the standard Decoder questions. Substack, the last time I talked to you, was 20 people. I think it’s around 90 now. You had some layoffs, but it’s around that size. Right?

CB: Eighty.

NP: Eighty. How many of those people are trust and safety folks?

CB: A handful.

A handful...

Well, what I'm sure of is that we're keeping our newsletter on Ghost.

We set Ghost up as a non-profit foundation so that it would always be true to its users, rather than shareholders or investors. Our legal constitution ensures that the company can never be bought or sold, and one hundred percent of our revenue is reinvested into the product and the community. As a public organisation we also believe in being transparent and accountable for everything we do, so we publish our live financial data for all to see. - Ghost

Further reads on Substack:

And to make sure we understand it's not only Substack:

Meanwhile...