When Star Wars will be shot on iPhone

Despite what we are led to believe, innovation is rarely straightforward or easy to understand. We think we get it from a TED talk or a trendy book, but that is essentially the same as learning paragliding through YouTube. No one serious would recommend that. When I need people to understand innovation, I rely on a few tricks and shortcuts. I use them to spark an ‘A-HA’ moment, a sign that you can shift away from your usual canned understanding of the matter to something entirely different. One such shortcut is asking “When will the next Star Wars be shot on iPhone?”

This question is obviously silly. How could a $455 M movie be shot with consumer mobile devices? So I usually need to explain. See, the Star Wars franchise was bought by Disney in 2012. That means Disney is absolutely dedicated to making money out of it for the next twenty years, if not thirty or fifty. And as blockbusters go, we can expect a movie in theaters every year, and maybe more once they try to spin off additional movies from the main storyline.

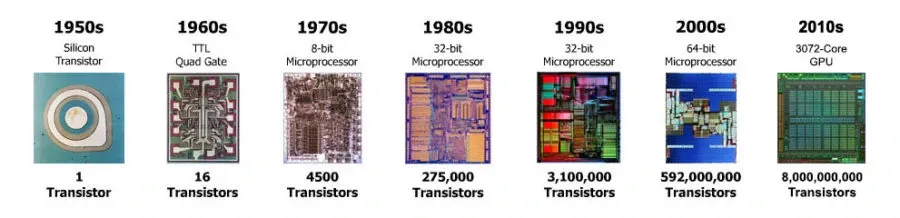

So when you think about it, Star Wars movies will be a very nice benchmark for innovation in media technologies for quite some time. To put it another way, we can discuss a sort of Moore’s law for shooting movies.

It would be a more complex law, not based on a single parameter such as pixels or lumens, but on the end result: can we shoot a Star Wars movie exclusively with a given camera?

Taking this perspective forces you to compare your gut feeling (well, this is not going to happen before 2030!) with what a more reasonable thought process would produce:

- Hardware, meaning sensor resolution and sensitivity, will probably be on par in less than two years (iPhones already shoot 4K, and the processing power is essentially there);

- Optics are a tough nut to crack, but they could be virtualized within 2-3 years (the iOS camera software has started to emulate the bokeh effect, crudely for now, and the iPhone 7 Plus already has optics designed to help software build the image);

- Star Wars movies are also moving in the same direction, with more and more virtual shooting, and you may not know it, but iPads are already part of the processing chain (with truckloads of proprietary software, for sure):

#Andor premieres in August

It will have 12 episodes and is set 5 years before #RogueOne pic.twitter.com/c8uT3vyQmW

— Culture Crave 🍿 (@CultureCrave) May 26, 2022

I could go into more detail on how the dots are connecting much faster than our common sense would tell us. But the point here is not about Apple (and I’m usually bad at predicting the future anyway).

This is about getting innovation right, and in this example, we have three critical learnings about innovation:

If there’s technology involved, there’s always Moore’s law at play, usually several of them. Find them. Understand what sustains them. Pinpoint what would be the real limiting factor that will eventually set the global pace of change.
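To make the idea of a limiting factor concrete, here is a minimal sketch in Python. It assumes each capability improves by doubling at a fixed pace and asks which one reaches its required level last. The capability names, levels, and doubling times in `CURVES` are made-up illustrative figures, not measurements, and the helper names are hypothetical.

```python
import math


def years_to_reach(current: float, target: float, doubling_years: float) -> float:
    """Years until `current` reaches `target` if it doubles every `doubling_years`."""
    if current >= target:
        return 0.0
    return doubling_years * math.log2(target / current)


# Illustrative assumptions only: (current level, required level, doubling time in years)
CURVES = {
    "sensor": (1.0, 2.0, 2.0),
    "optics": (1.0, 8.0, 1.0),
    "processing": (1.0, 1.0, 1.5),
}


def limiting_factor(curves):
    """Return the capability that takes longest to reach its target, and the wait in years."""
    waits = {name: years_to_reach(*params) for name, params in curves.items()}
    slowest = max(waits, key=waits.get)
    return slowest, waits[slowest]


if __name__ == "__main__":
    name, wait = limiting_factor(CURVES)
    print(f"Limiting factor: {name}, about {wait:.1f} years away")
```

The slowest curve sets the date, no matter how fast the others move. Change one doubling time and the limiting factor may switch, which is exactly why it pays to understand what sustains each curve.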

What you think of the product is usually wrong. That’s because you think of the product in a superficial way and say, “The camera will never be good enough!” when it’s more about the software than the hardware.

Innovation never happens from a single direction. Even if you don’t believe that the iPhone's technology will catch up within three years, the underlying market is evolving by itself. Movies are shot in a very different way than when the first Star Wars came out in 1977. The way a 2017 Star Wars movie is shot is rapidly converging with where Apple is headed, for entirely different reasons.

If this all starts to make more sense to you, you can play this game with different markets: automotive, energy, banking, insurance, etc. It is a very nice exercise to sharpen your innovation thinking.

But for movies (if not for Star Wars yet)? We’re already there ⤵️

EDIT: A link to Frédéric FILLOUX's Sep. 5 article on why camera makers should adopt Android.