AI research in healthcare? It's a mess

Lately, a few sobering papers have been published about artificial intelligence (AI) research in healthcare.

Research on research might seem a vain redundancy. Perhaps, but since 2015 the scientific community has had a massive wake-up call: many published results could not be replicated.

Replicating a research result is the cornerstone of science. Publish your results and the methodology you used, and anyone else should be able to obtain the same result by following that methodology.

Looking at the last ten years of AI experiments across medical fields (from analyzing X-rays to early-stage carcinoma detection to optimizing postoperative care), it seems that many researchers shot from the hip instead of painstakingly laying a solid scientific foundation.

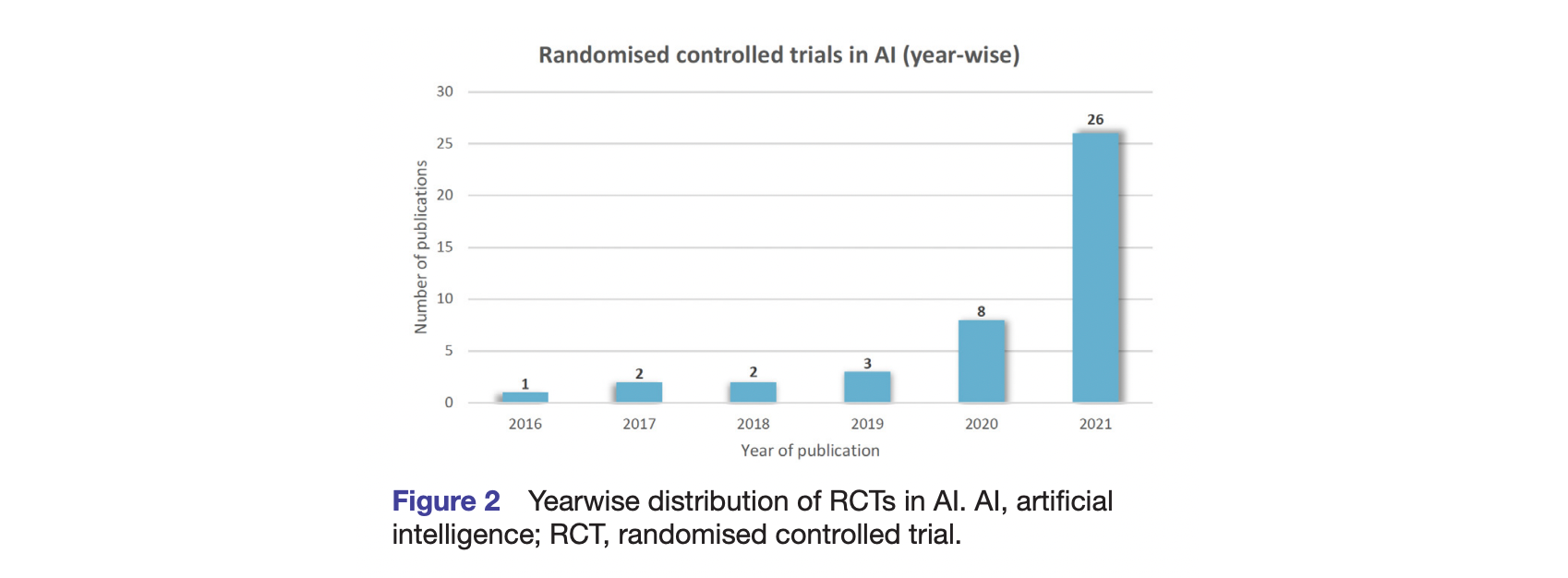

The main critique? The sheer lack of randomized controlled trials (RCTs).

Of the 19,737 articles on AI in healthcare screened in one systematic review, only 41 reported randomized controlled trials [1]. And it gets worse: most of those trials did not even follow CONSORT-AI, the reporting standard for clinical trials of AI interventions published jointly in Nature Medicine, The BMJ, and The Lancet Digital Health.

As if this could get any worse, among the few studies that did use an RCT design, patient sample sizes ranged from twenty-two to a few thousand [2]. I'll let you ponder the statistical power of a trial with twenty-two patients.
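To put a rough number on that, here is a back-of-the-envelope sketch that is not taken from either review. It assumes a hypothetical two-arm trial with equal allocation, an outcome expressed as a standardized effect size (Cohen's d), a two-sided 5% significance level, and a normal approximation. The function names are illustrative only.

```python
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution


def power_two_arm(total_n: int, effect_size: float, alpha: float = 0.05) -> float:
    """Approximate power of a hypothetical two-arm trial comparing means.

    Assumptions: equal allocation, standardized effect size (Cohen's d),
    two-sided test, normal approximation instead of the t-distribution.
    """
    n_per_arm = total_n / 2
    z_crit = Z.inv_cdf(1 - alpha / 2)
    # Standard error of the difference in means, in SD units, is sqrt(2 / n_per_arm).
    noncentrality = effect_size * (n_per_arm / 2) ** 0.5
    # Ignores the negligible chance of rejecting in the wrong direction.
    return Z.cdf(noncentrality - z_crit)


def min_detectable_effect(total_n: int, power: float = 0.8, alpha: float = 0.05) -> float:
    """Smallest Cohen's d the same hypothetical trial detects with the given power."""
    n_per_arm = total_n / 2
    return (Z.inv_cdf(1 - alpha / 2) + Z.inv_cdf(power)) * (2 / n_per_arm) ** 0.5


if __name__ == "__main__":
    for n in (22, 200, 2000):
        print(
            f"n={n:>5}: power for d=0.5 is {power_two_arm(n, 0.5):.2f}, "
            f"effect needed for 80% power is d={min_detectable_effect(n):.2f}"
        )
```

Under these assumptions, a 22-patient trial has only about a one-in-five chance of detecting a moderate effect (d = 0.5). It would need an effect larger than one standard deviation to reach the conventional 80% power, whereas a trial with a few thousand patients can detect effects roughly a tenth that size.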

At this point, there ought to be a long discussion of why some AI tools, because they are classified as "medical devices," do not require high-level randomized, double-blind protocols. More importantly, this is another example of how regulators (whether the FDA in the US or the CE marking process in Europe) lag behind innovators pushing into the market.

To be clear, this is disastrous, especially when an unproven technology like AI, fueled by heavy venture-capital expectations, enters a field of research as demanding as medicine.

In that regard, the adjacent foodtech market has been far more nimble and adaptive, proving that connecting the deep layer of "laws" with the fast-moving layer of "innovation" is not a lost cause.

[1] Plana D, Shung DL, Grimshaw AA, et al. Randomized Clinical Trials of Machine Learning Interventions in Health Care: A Systematic Review. JAMA Netw Open. 2022;5(9):e2233946. doi:10.1001/jamanetworkopen.2022.33946

[2] Shahzad R, Ayub B, Siddiqui MAR. Quality of reporting of randomised controlled trials of artificial intelligence in healthcare: a systematic review. BMJ Open. 2022;12:e061519. doi:10.1136/bmjopen-2022-061519